Portfolio

Overview

- MEng Computer Science, Bristol University

- 11 Years Professional Game Development Experience

- Experience with Unreal Engine (expert), Unity (essentials), and proprietary game engines

- Lead Engineer on multiplayer networked VR projects in Unreal

- Trained Web Application Penetration Tester

- Experienced in C++, Python, Java, C#, Go, NodeJS, JavaScript, PHP, Docker, Heroku, Git, Perforce, TeamCity, Jenkins, Code Review Software

- Senior Principal/Lead Programmer: managing team workload, code reviews, project architecture, communicating with publishers/clients, milestone documentation

- Profiling of CPU and GPU (analysis, reporting, delegation of work, and confirming that improvements worked)

- Deep dive debugging - running debug tools for mobile platforms, solving complex crashes and bugs

- Consulting with non-coders to update project-wide dependencies and give implementation advice from a technical perspective

Video Highlights

Ghostbusters: Rise of the Ghost Lord

nDreams - TBA 2023 (Oculus Quest 2 and PlayStation 5)

Senior Principal (Lead) Programmer on Ghostbusters VR for Oculus Quest 2 and PlayStation VR 2 (PlayStation 5). Built in Unreal Engine.

- Manage the Engineering team for the project and time planning

- Architectural design and review of team's commits

- Automated testing and continuous integration setup and maintenance

- DevOps setup (TeamCity and Unreal Game Sync - now used across nDreams studios), build management

- Profiling and optimisation strategies

- Modifications to Unreal Engine's build pipeline, editor improvements, fixes for Quest platform

- Handle merging engine changes and combining Epic, Oculus/Meta, and Sony's forks of Unreal Engine

- Release management to storefronts

- Milestone documentation and client communication

- AWS cloud management (maintenance of FlexMatch setup) and Epic Online Services integration

- Unreal Engine integration for Localisation, Significance Manager, Bink Video, Level Streaming, Debug Tools, Analytics, Loading Screens, Linux server packaging

Phantom: Covert Ops

nDreams - June 2020 (Oculus Rift & Oculus Quest)

Principal (Lead) Programmer on Phantom: Covert Ops for Oculus Quest and Oculus Rift built in Unreal Engine 4.21.

This title is published by Oculus Studios and was announced early in the Oculus Quest launch window to high praise from the press, including "VR/AR Game of the Year 2019" at E3 from the official judges panel.

- Code architecture design for the project

- Time planning and schedule management for Code-driven elements of Phantom

- Working with Production to plan work for the Code team

- Write technical specifications for tasks based on Designer requirements and technical limitations

- Mentoring and Guidance To Code Team

- Run regular Code Meetings with team to address concerns and inform on project/company updates

- Guidance on Unreal Engine code structure and design

- Run Junior Programmers' quarterly reviews

- Code Leadership on Project

- Work closely with Game Director and Production to plan out work, time estimate each feature, and review feature specifications

- Suggest alternatives to features to avoid potential future issues, or improve user experience where issues are identified

- Present use of technology with clients when requested

- Collaboration with other project principals to handle cross-discipline requirements

- Work with QA team to help add debugging tools to speed up testing time

- Make sure QA always have builds to smoke test and perform regression on

- Project Recruitment

- Run Screening Calls and Technical Interviews for Phantom and future title hires

- Project Build Setup

- Internal TeamCity install, integrated with internal LDAP authentication

- Build scripts to automatically cook, nativize, and compile project into builds

- Unit tests to check some core project features work after a build

- "Cell Tool" Unreal Editor tooling and runtime streaming

- Allows users to "cut" the world into Cells in-editor through a UI panel

- Tools allow quickly moving all static geometry into the cells

- Cells are streamed in and out as the player moves around the map

- Cells are then baked into simplified Hierarchical LODs, which are used when the cell is streamed out

- Doubles as a cheap occlusion system, used to cull any non "Celled" assets automatically

- Dynamic Blueprints register to "Cell Events" to disable logic and visual primitive components, acting as an effective manual occlusion system for dynamic props

- Built override volumes to allow modifying Cell states (loaded, HLOD level, unloaded, etc.) at runtime for detailed control over how the level loads

- Localisation Setup using UE4's FText framework

- Localisation integration with FMOD for localised audio

- Custom subtitles renderer for VR compatible with PC and Quest (Stereo Layer on PC and Quad on Oculus Quest)

- Significance Manager Integration

- Built on top of the Unreal Engine Significance Manager

- Allows tight control of how many of each object type are allowed to process each frame

- Performance Profiling

- Performed regular CPU captures of the title on Oculus Quest, determined which areas need optimising and general strategies to be taken

- Dependency and loading profiling, resolving implementations using hard asset references causing long loading times

- Optimisation Direction for Game Systems

- Introduced round-robin style processing for enemy AI

- Process a fixed number of enemies each frame, prioritised through multiple sliding windows based on proximity to the player

- Similar approaches applied throughout the project to dynamic light cones, security cameras, etc. to guarantee a constant maximum frame cost on the Oculus Quest's CPU

- Automated Profiler

- Built automated profiler to capture CPU, Draw, and GPU stats throughout each game mission

- Exports to CSV on PC or Quest - loosely based on ProfileGo tool from Fortnite

- Optionally take screenshots at each capture location

- Configurable in Blueprint to override capture locations, settle time, capture time, etc.

- Broadcasts events to Blueprint when specific capture has started to allow for dynamic set-piece profiling

- Used daily by QA to evaluate problem areas of the game for targeting optimisation by Environment Artists

- Save Game framework

- Allows any UObject to save its state

- Data is restored during runtime without resetting the scene, allowing less than half a second to restore game state from player's last checkpoint

- Built as a reusable plugin for future nDreams titles

- Integrated into the Oculus Cloud Save system to allow cross-platform saves between Oculus Quest and Rift (feature built with this in mind from inception, working with platform-specific files with varying filenames correctly)

- Dialogue Plugin

- FMOD compatible Dialogue plugin

- Plays dialogue on actors (actors cannot say more than one line at a time)

- Allows multiple levels of priority and custom latent Blueprint nodes to receive Blueprint callbacks when dialogue has finished or been interrupted

- Event Tracker

- Track all important actions the player performs during any mission

- Can be filtered and searched at the end of the mission to build statistics about the player's run-through

- Can optionally pass whitelisted events to the Analytics servers to track player activity for future title research

- Debug Menu Backend

- During development a debug menu was available to control portions of the game

- Dynamically built by scanning the project for appropriate console variables

- Based on a plugin that exposes C++ Console Variables to Blueprint so the debug menu can present appropriate UI for control (e.g. a toggle or integer input)

- Loading Screen

- UMG-powered loading screen with C++ backend that is presented on a stereo layer in VR

- Receives rough percentage progress from cell streaming backend to update graphic

- Can optionally block level start if important information needs to be shown for longer than loading takes

- Video Streaming

- Using in-built Unreal video management

- Wrote FFMPEG commands to compress into reasonable filesizes in codec suitable for both Windows and Android

- PhysX Debugging

- Debugging an Android PhysX crash using PhysX Visual Debugger and Unreal Engine modifications

- Oculus Platform Integration

- Oculus Leaderboard Manager

- Entitlement Checks

- Oculus Cloud Storage for Cross-Platform (PC and Android) save game support

- Achievements Tracking

- DLC Support

- Hot-loading of additional maps through DLC files

- Dynamic detection of new content through patches without full game redistribution

- Speculative forward-thinking implementation in case DLC is requested post-launch

- Various adjustments to the Unreal build tools to enable fully fledged DLC support for Android
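The round-robin optimisation described in the list above (a fixed number of enemies processed each frame, prioritised by proximity to the player) can be illustrated with a minimal Python sketch. The shipped system was built in Unreal Engine and its details are not given here, so the names `Band` and `RoundRobinScheduler`, the two distance bands, and the per-band budgets are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Band:
    """One proximity band with its own per-frame budget and sliding cursor."""
    max_distance: float  # enemies closer than this fall into the band
    budget: int          # how many enemies of this band to process per frame
    cursor: int = 0      # where the sliding window starts on the next frame


@dataclass
class RoundRobinScheduler:
    # Hypothetical bands: near enemies are updated more often than far ones.
    bands: list = field(default_factory=lambda: [
        Band(max_distance=10.0, budget=2),
        Band(max_distance=float("inf"), budget=1),
    ])

    def pick(self, distances):
        """Return the ids of the enemies to process this frame.

        `distances` maps enemy id -> distance to the player. At most
        sum(band.budget) ids are returned, so the per-frame cost has a
        fixed upper bound regardless of how many enemies exist.
        """
        chosen = []
        lower = 0.0
        for band in self.bands:
            members = sorted(
                e for e, d in distances.items() if lower <= d < band.max_distance
            )
            lower = band.max_distance
            if not members:
                continue
            count = min(band.budget, len(members))
            start = band.cursor % len(members)
            # Slide a window of `count` ids through the band, wrapping around.
            chosen += [members[(start + i) % len(members)] for i in range(count)]
            band.cursor = (start + count) % len(members)
        return chosen
```

Calling `pick` once per frame with fresh distances walks each band's window forward, so every enemy is eventually serviced while nearby enemies are serviced more often.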

ThemeParks.wiki

Personal Project (GitHub)

A reverse-engineering project to monitor theme park attraction wait times around the world. Most resorts have their own API and data format for wait times, used by their mobile application and/or park signage, so this library gathers the various data structures and transforms them all into a single easy-to-digest JSON format that is consistent between all resorts.

The motivation behind this project is to monitor park wait times for some personal projects and extreme data analysis for some vacation planning. My library is now used by the majority of the unofficial wait time sources for Disney World and many other parks. I have worked with various popular websites specialising in theme park wait time data analysis to help them update with API changes.
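As a rough illustration of the transformation described above, the sketch below maps two differently shaped payloads onto one shared schema. The real library is written in JavaScript (NodeJS); this Python version, the two payload shapes, the adapter names, and the output fields `name`, `waitTime`, and `open` are all hypothetical and do not reflect the library's actual API.

```python
def from_resort_a(raw):
    """Hypothetical resort A payload: {"rides": [{"title": ..., "wait": ...}]}."""
    return [
        {"name": r["title"], "waitTime": r["wait"], "open": r["wait"] is not None}
        for r in raw["rides"]
    ]


def from_resort_b(raw):
    """Hypothetical resort B payload: {"<name>": {"minutes": ..., "status": ...}}."""
    return [
        {
            "name": name,
            "waitTime": info["minutes"] if info["status"] == "OPEN" else None,
            "open": info["status"] == "OPEN",
        }
        for name, info in raw.items()
    ]


# One adapter per resort; adding a resort means adding one function here.
ADAPTERS = {"resort_a": from_resort_a, "resort_b": from_resort_b}


def normalise(resort, raw):
    """Convert one resort's raw payload into the shared schema, sorted by name."""
    return sorted(ADAPTERS[resort](raw), key=lambda ride: ride["name"])
```

Consumers then only ever see the shared schema, whichever resort the data came from.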

Project has taught me:

- Advanced Git management

- Pull Request and Issue management (rejecting and accepting Pull Requests carefully and politely)

- Proficient JavaScript (NodeJS) knowledge

- Careful API design and Semantic versioning

- Automated testing for an open-source project

- Various methods of data security, how to build them and how they can be circumvented

- SSL traffic spoofing and proxying

- How many amazing themed resorts there are in the world (and how many I still need to visit)

Defunctland VR

Defunctland VR Team - 2018 onwards

Lead Programmer on fan project to recreate various "defunct" theme park attractions in Unreal Engine 4. This project is part of the Defunctland YouTube channel, although the team working on this project are largely independent from the YouTube channel and are self-orchestrated.

The team is built of passionate artists with little game development experience, so part of my role is to try and help educate other members in how to build game-ready assets, requiring me to get more involved in the Art pipeline than I usually am professionally.

Built various systems and features:

- Networking-capable player movement in VR and 2D

- Networking-capable spline-based ride vehicle with synchronized audio track to match up with the ride progress

- Performance reviews of Art assets, trying to identify how to improve rendering time

- 360 Panoramic Capture tool modifications to export ride as a 360 YouTube video

Defunctland VR 20,000 Leagues was released early 2021 featuring a full recreation of classic Disneyland ride "20,000 Leagues Under The Sea", with other rides to follow later in both 360 video and fully interactive Unreal project experiences.

Various VR Titles (on-hold / private / cancelled)

nDreams

Various titles at nDreams that have been work-for-hire (private), on-hold, or cancelled. These were all built in Unreal Engine 4 and often included VR multiplayer support.

During this time I gained advanced Unreal Engine 4 networking knowledge, and led a small team to ship a private, highly polished proof-of-concept title for a client that included complex VR networking capabilities. This demo was actively used by the client for many years, and is still utilized internally as a key part of the nDreams portfolio.

Danger Goat

nDreams - November 2016 (Google Daydream)

Danger Goat is a launch title for Google’s Daydream VR headset built in Unity 5. I was working on a separate title for the majority of Danger Goat's development, but a sizable amount of my early contributions during prototyping ended up in the final title.

My contributions:

- Early game prototyping, building 3D grid path-finding and initial character movement (this prototype work ended up shipping with the game)

- Bug fixing when team was short on staff

- Integrated the in-house nDreams Analytics service into the project

- Build machine setup and maintenance

VR Game Port (cancelled)

nDreams

Worked with a proprietary game engine from another development studio to port an existing, very successful AAA game to VR. Integrated support for the Vive VR headset and motion controllers.

Built working technical demo, but ultimately project wasn't continued due to external client factors.

The Assembly

nDreams - July 2016 (Steam and Oculus) | October 2016 (PlayStation 4) | December 2016 (Motion Controller Update)

The Assembly is an adventure game set in a morally ambiguous scientific organization.

This game was created in Unreal Engine 4.5, upgraded through each release during development to 4.13. Game initially released in Unreal Engine 4.11, then updated to 4.13 through patches to add motion controller support for all platforms.

My contributions:

- Early game prototyping in Unity and Unreal Engine 4

- Key Game Mechanics and Framework

- Base player setup

- Interactive objects

- Core framework for objects to receive interaction from players, optionally overridden per-object or per-instance in Unreal

- Openable objects - doors, drawers, fridges etc.

- Outline effect material for highlighted objects

- Save game system - reflects against Design-chosen properties to save and restore

- Dialogue system

- Multiple channels of dialogue, allowing overlapping background speech to drop in volume when characters are speaking

- Broadcast dialogue from multiple sources for 3D audio effects

- Interrupt actors based on priority settings

- Integrated into localisation system to support subtitles and custom audio localisation system (audio localisation unsupported in Unreal Engine 4 at the time)

- Reverse engineered chosen legacy localisation service so localisation updates could be automated (previously done by hand, taking upwards of 30 minutes each time)

- “The Box Puzzle”, Chapter 3 - taken from Design prototype to Code-based playable level. Dynamically creates puzzles from Unreal Engine 4 data assets

- The game’s lift - created in Blueprint to handle all Design situations

- Prototyping various forms of the microscope mini-game

- Set up NPC Animation Blueprints, path systems, and navigation Blueprint libraries for Design to use

- Achievements integration on Steam and PlayStation 4

- Credits Systems - VR-friendly credits Blueprint for the end-of-game, quickly adapted to be placed on a monitor in the main menu screen as well

- Custom analytics solution - created reusable Unreal plugin for our in-house nDreams Analytics service

- Added motion controller support post-launch (Vive and Oculus Touch), requiring redevelopment of many of the core game systems

- Build Tools

- Over-night in-house build farm (Jenkins)

- Daily automated performance run-throughs with custom report web interface (NodeJS) to identify areas for improvement

- Console patch support for Unreal Engine 4

- Automated localisation updates in Unreal Engine 4 (in-house custom integration with LocalizeDirect)

- Configuration tools for creating multiple SKUs of the game ready for shipping (NodeJS)

- Game and Engine optimisations

- Studying daily performance reports to find framerate issues

- Identifying performance issues and debugging expensive scenes in Unreal Engine 4

- Working with Epic Games to patch Unreal Engine 4 for our use

AlphaZone4

2008 - 2015

Very popular fansite for PlayStation Home, attracting the largest user base of any PlayStation Home site.

Developed a large suite of tools in both PHP and NodeJS to manage a database of every item and location in PlayStation Home, comprising over 68,000 entries.

Reverse engineered application to gather data sets to update website in an automated fashion. Parsed unreliable inputs, sanitised, and fed into database.

Regularly held live events within PlayStation Home with giveaways and competitions. Managed a team of 3-7 people on site to keep content up-to-date.

Maintained good relationship with PlayStation Home content developers, maintaining NDAs, assisting with developer marketing and promotions.

Featured on the PlayStation Blog.

Built in PHP, HTML, JavaScript, NodeJS.

Blood Minerals

nDreams (Android and iOS - never released)

Blood Minerals is an Android and iOS mobile adventure game to spread awareness of issues in the Democratic Republic of Congo (DRC).

nDreams took over the project internally after external development halted. We worked to optimise all the game assets and rewrote large portions of the game code to allow the game to run on mobile devices.

This was eventually released as a collection of audio books, and the interactive game was abandoned.

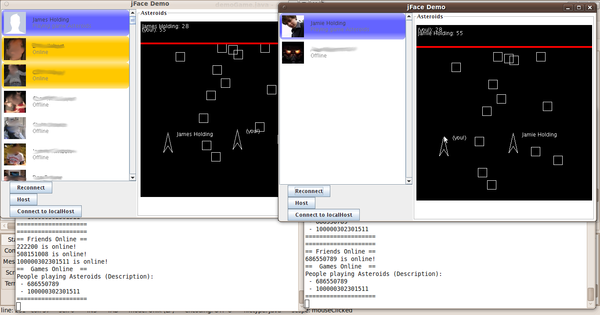

SkyDIEving

nDreams - August 2013 (PC Oculus Rift DK1)

SkyDIEving is nDreams’ first VR tech demo. It was released for free in August 2013, shortly after the Oculus Rift DK1 kits were available.

An artist at nDreams put together the initial artwork, and I was then responsible for implementing the game itself. During development, I also added multiplayer support so people could skydive together.

Later on when the Oculus Rift DK2 was released, I updated the codebase to work with the newer headset and re-released the tech demo.

Gossip Column

nDreams - November 2013 (Android)

This was part of nDreams’ second wave of mobile games, which sadly was never released outside a few select trial countries.

This game was made in Unity 4, and was a word scrabble-style game where you must make words from the random assortment of letters given to you.

Gossip Column featured significant Facebook integration, allowing highscore tables and jaunts against friends.

My Contributions:

- Primary coder on Gossip Column

- Advanced use of Unity UI plugin NGUI

- Facebook social integration and IAPs

- Dynamic main menu that pulled content from nDreams.com to promote IAP sales or other nDreams titles

PubPlay

nDreams - August 2013 (iOS)

Developed various mini-games with staff at nDreams.

My Contributions:

- Developed Smashed! - Balance bottles on a tray using the phone’s gyroscope

- Set up user interface using NGUI

- Created front-end menu interface

- Integrated In-App Purchases (IAP) into Unity for iOS

- Wrote IAP verification server scripts to validate purchases

- Set up Facebook integration

Netcraft

September 2011 - March 2013

Worked as an "Internet Services Developer" helping to maintain tools, add new features, write public-facing blog posts, and perform penetration tests of web applications for clients.

Main roles:

- Maintaining automated phishing-site detection systems

- Written in Perl and MySQL, Git version control, servers managed with Puppet

- Added redirect support to capture complex redirection trails used to hide phishing sites, and to stop users gaming our monthly competition by submitting the same site multiple times via different redirects

- Added "Phish Kit" analysis that would automatically analyse phishing kit source code for attacker credentials or any other potentially useful insights to help detect the same phishing template more easily or identify the attacker

- Skills learnt: MySQL optimisation/management, Puppet server deployment, Perl, Regex, advanced Git usage and management

- Penetration Tester

- Performed web application penetration testing against various external sites for clients

- Included a popular high-street bank's public-facing site, a scientific research management app, a popular online jewelry store, and an agricultural resource management app

- Combination of off-the-shelf tools (SQLMap, nmap, etc.) and in-house tools, which I helped to maintain, to perform automated scanning for common issues and detect publicly known CVEs through advanced software version detection

- Each report had hand-written analysis of every possible vulnerability, possible entry-points for attack, and mitigation recommendations

- Reports often over 100 pages with executive-facing summaries for client to understand the contents of the report, as well as deeper insights for technical staff to mitigate issues

- Produced sample code where relevant to demonstrate exploits

- Kept up-to-date with new exploits and attack vectors (and still do)

- Skills learnt (non-exhaustive): SQL Injection, XSS, CSRF, frame jacking, open redirects, Burp Suite, OWASP, session prediction, cookie/session injection, professional report authoring

- Takedown Monitoring

- Outside of soft-blocking all phishing sites on the internet, Netcraft offer a service where we would manually get phishing sites taken down for our clients

- This involved contacting web-hosts, domain registrars, local law enforcement, email providers, etc. to get the phishing site removed as soon as possible

- To be successful at this, overcoming language barriers and clear communication was essential since many phishing sites are hosted in non-English speaking countries

- Skills learnt: telephone communication across language barriers, explaining complex technical issues to support staff, knowing when to mention things like "FBI" to escalate the issue without pushing too hard, knowledge of law enforcement priorities when it comes to phishing attacks

University of Bristol

MEng Computer Science - Graduated 2011

I completed the Masters in Computer Science in 2011 at the University of Bristol.

University Projects/Work Highlights:

- My thesis: Predicting the stock market using real-time sentiment analysis of Twitter through neural networks (and it kind of worked too)

- Third-year Group Project: Interactive motion-simulator game (I have written more about this below)

- Layered material based Raytracer in Java

- Basic Maya usage, skinning, animation, and rendering

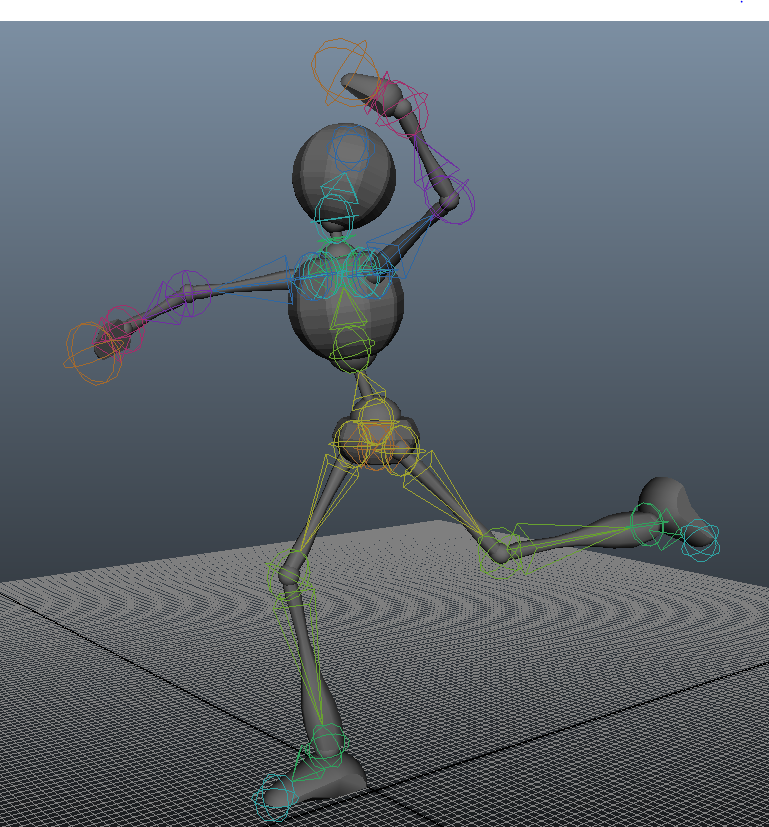

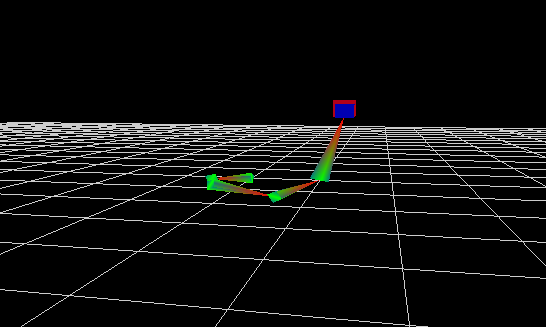

- IK Solver written in C++ using OpenGL for visualisation

- Robotics - LEGO maze solving robot written in Matlab

- Computer Vision - aid a computer in understanding stereo imagery and identifying objects using OpenCV

- Various programming languages and their applications (C, C++, Haskell, Java, Verilog, Assembler, ARM) - I have found it very easy to learn new programming languages since I completed the course

My thesis: http://cdn.jamie.holdings.s3-website-eu-west-1.amazonaws.com/Thesis.pdf

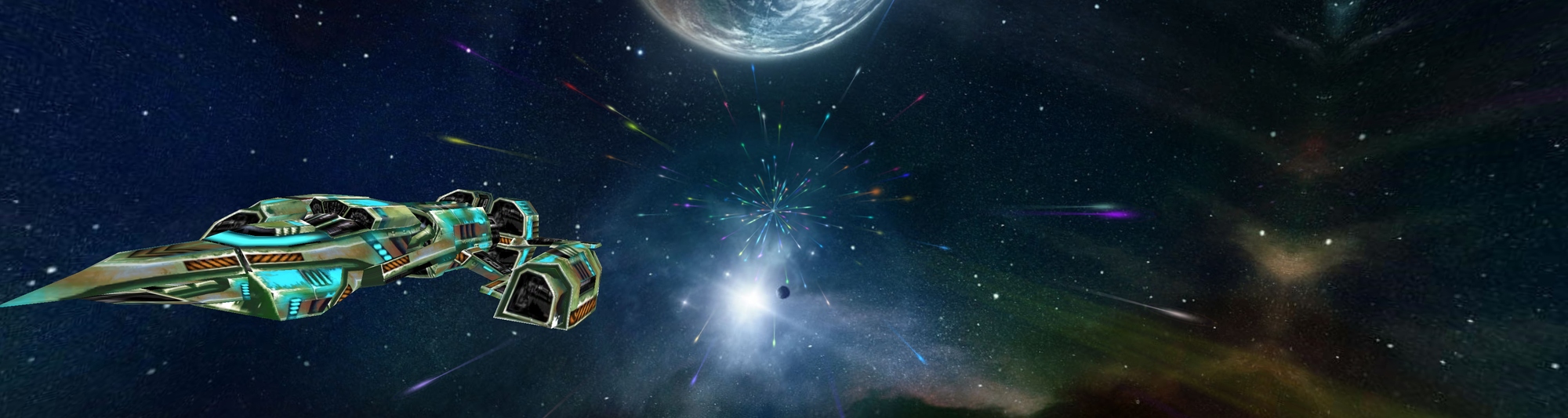

Motion Simulator Project

This project used a MotionBase simulator with 6 hydraulic legs. The hardware required drivers that only ran on an older Windows machine that could not support modern graphics cards, requiring us to build the project with networking support so we could render the game on a different machine to the one that was controlling the simulator. Running over the network required some latency compensation and smoothing to ensure players were not nauseous when playing.
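The text does not say which smoothing technique was used, so the following is only a minimal sketch of one common option: exponential smoothing towards the latest networked target, with a hard limit on how far the pose may move per update. The function name `smooth_pose` and the `alpha` and `max_step` values are assumptions made for illustration.

```python
def smooth_pose(current, target, alpha=0.2, max_step=1.0):
    """Move each axis of `current` a little way towards `target`.

    alpha    -- fraction of the remaining error closed per update (assumed value)
    max_step -- hard limit on change per update, so a late or bursty
                network packet cannot jerk the platform (assumed value)
    """
    result = []
    for cur, tgt in zip(current, target):
        step = alpha * (tgt - cur)
        # Clamp the step so large jumps in the target are spread over time.
        step = max(-max_step, min(max_step, step))
        result.append(cur + step)
    return result
```

Calling this once per control tick with the most recently received target keeps motion continuous even when packets arrive unevenly.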

Our game was a 3D "side-scroller" where you fly through an asteroid field to reach a portal. Players are in control of the movement of the ship as they continue to fly in the general goal direction. Shooting asteroids clears obstacles and awards points. There was an optional mode where a second player outside of the simulator could control a gun turret on top of the spaceship and help to destroy asteroids; however, we felt this made the game too easy and lessened the experience for the pilot inside the simulator, so we never demonstrated this part of the game.

The game was built on top of Ogre, which only handles the rendering. We had to build the game inputs, particle systems, networking library, audio library etc. ourselves.

I was responsible for the networking and control of the motion simulator. I built various safety measures into the title to ensure the simulation could be stopped at any moment if needed. The motion-simulator PC ran as a state machine that could optionally subscribe to the networked game session. A single keyboard input would calmly stop the simulation (independent of the game) if anything were to go wrong or a player felt woozy. Our lecturers said it was the most comfortable simulator experience they had ever tested when we demonstrated the project.
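The state machine described above might look roughly like the sketch below. It is not the original code: the state names, the `SimulatorController` class, the single `height` axis standing in for the full 6-leg pose, and the easing factor are all assumptions made to keep the example short.

```python
from enum import Enum, auto


class State(Enum):
    IDLE = auto()       # platform parked, ignoring the game
    FOLLOWING = auto()  # subscribed to the game session, applying its motion
    STOPPING = auto()   # easing back to the neutral pose


class SimulatorController:
    def __init__(self):
        self.state = State.IDLE
        self.height = 0.0  # one axis stands in for the 6-leg pose

    def subscribe(self):
        """Start following the networked game session."""
        if self.state is State.IDLE:
            self.state = State.FOLLOWING

    def emergency_stop(self):
        """Bound to a single key; does not depend on the game being reachable."""
        if self.state is not State.IDLE:
            self.state = State.STOPPING

    def tick(self, game_height=None):
        if self.state is State.FOLLOWING and game_height is not None:
            self.height = game_height
        elif self.state is State.STOPPING:
            # Ease towards neutral rather than dropping instantly,
            # and ignore anything the game is still sending.
            self.height *= 0.5
            if abs(self.height) < 0.01:
                self.height = 0.0
                self.state = State.IDLE
```

Because the stop is handled entirely on the simulator side, it keeps working even if the game machine crashes or the network drops.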

Some images from University projects

Loricum

MED Theatre - Summer 2010

“[…] sweet adventure with a distinctive art style and an intriguing, very personal storyline with three very different possible conclusions.” - adventuregamers.com

Loricum is a point-and-click style adventure game based on a play called “Loricum”. I led mentoring on this project, teaching programming skills to the young people involved, and assisting with game design.

Loricum is written in Adventure Game Studio (AGS), and was written over the summer holidays of 2010 with local young people aged 13-18.

The game is available free on the MED Theatre website, and also as a hard-copy at MED Theatre.

My Contributions:

- Lead consultant and mentor to youth theatre group in Game Design and Computer programming

- Created initial game template and assisted resolving bugs, but ensured the young people wrote the vast majority themselves

- Created installer software for CD-ROM distribution

- Consulted with art and audio direction from early development through to importing into game engine

Skills Learnt:

- Video Game Development from planning to completion

- Mentoring young people and development of new skills

- Basic animation principles to build character walk cycles and animated introduction film

- Building publishable CD-ROM with game installer

Facebook Chess

May 2007

Built the first Chess application on Facebook, one of the first 100 apps to ever exist on Facebook.com.

Grew to support hundreds of thousands of active monthly players. Sold to Chess.com when I started university.

Built in PHP and MySQL.

Skills Learnt:

- Building scalable/optimised PHP applications for hundreds of thousands of users

- JavaScript/AJAX to speed up game times to simulate a live game session before modern live web applications were possible

- How to comply with Facebook information privacy/security requirements to not store personal data etc.

- Complex CSS to render chess board easily within Facebook's limited application space (across lots of different devices)

- Player management - shadow-banning abusive users, maintaining integrity of Chess leaderboard

- Ranking systems and tournament management (I used Elo ratings here)

- Forum moderation - dealing with bad language, harassment, bullying and violent threats from users towards myself and other forums users (before sold to Chess.com when it was just me running the application)

- Release strategy - pushing features live without breaking the application while live games were being played
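For readers unfamiliar with the Elo system mentioned in the list above, the standard rating update can be sketched as follows. The original application was built in PHP and its exact parameters are not stated, so this Python version and the K-factor of 32 are assumptions for illustration only.

```python
def expected_score(rating_a, rating_b):
    """Probability-like expected score for player A against player B."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a, rating_b, score_a, k=32):
    """Return the new ratings for both players after one game.

    score_a -- 1.0 for a win, 0.5 for a draw, 0.0 for a loss (player A)
    k       -- K-factor controlling how fast ratings move (assumed value)
    """
    expected_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - expected_a)
    # Player B's result and expectation are the complements of A's.
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - expected_a))
    return new_a, new_b
```

With two players rated 1500, a win moves the winner to 1516 and the loser to 1484, and the total number of rating points across both players stays the same.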

Skills Not Learnt:

- How to play chess at a decent level

Lost Roots

MED Theatre - 2007

Lost Roots is a short drama/educational film discussing the issue of “alien” beech trees on Dartmoor. I directed and edited the film, and helped with storyboarding/script/music.

Lost Roots won an award at the MotionPlymouth Film Festival.

Skills Learnt:

- Storyboarding and script development

- Directing the shoot

- Camera control and audio capture (capturing atmos, music, and dialogue effectively)

- Video Colouring/Tinting to maintain balance throughout scenes when they were shot throughout the day

- Shot scheduling and planning (checking weather maps and estimating setup time to build a 1 day outside shooting schedule that would get us all the shots we needed)

- Video editing (Final Cut Pro)

- Photoshop - I drew the "Lost Roots" logo (the character spacing irritates me now I look back on it)
